Learning LLVM: Compiling LLVM

LLVM is a collection of compiler and toolchain components that supports many programming languages and target machines. Its popularity is rising: according to JetBrains, around 26% of C++ developers use it to compile C++. Although it is still far from the ubiquity of the GCC toolchain, it is being adopted across industries, and its development moves very fast because many of its developers are sponsored by those industries. So you can experience cutting-edge compiler technology by using LLVM to compile your programs.

I have been using LLVM for quite a while, especially since I joined the Tizen team at Samsung Research Indonesia. My experience with the LLVM toolchain, especially with the Clang frontend, has been really great. Now I have the opportunity to go deeper than just writing code to be compiled with the toolchain, by looking into how LLVM actually works. I therefore wrote this article, which may grow into a series, to aid my learning process and to share what I learn by studying and experimenting with LLVM.

Preface

As an introduction, I can assure you that learning LLVM is both challenging and interesting. Like other open source compiler systems, it lacks a straightforward tutorial or how-to documentation for newcomers. It seems that people who are interested in digging into the internals of the compiler are expected to have the necessary fundamental knowledge and to be proactive learners who read the code directly.

LLVM is written in C++ with a very modular and extensible design. The source code is heavily documented, down to the inline comments, which makes much of the code self-explanatory. It is a really good starting point for learning how a compiler works. As a plus, it is not an experimental or academic compiler; it is a fully working compiler that you can use to compile actual source code and execute the result.

Some basic knowledge is required:

- Modern C++ knowledge: LLVM is written in a post-C++11 dialect. It uses a lot of newer language features such as lambda functions, range-based for loops, and new standard library classes.

- Object-Oriented Paradigm, especially C++ coding patterns: There are many C++-specific idioms such as multiple inheritance and mixins. So, it is a good idea to understand C++ coding patterns before digging deeper into the LLVM code.

- Compiler Construction: It is a good idea to understand the underlying mechanism and architecture of a compiler before learning an actual implementation. A compiler is really big and has multiple components working together. It may be impossible to learn it overnight, but you also don’t have to learn every component to start experimenting with the compiler source code. I currently don’t understand the parser algorithm, syntax analyzer, and so on either, but I am particularly interested in several subjects such as intermediate representation, program optimization, and code generation. You can pick and choose the details you are interested in, while still understanding the general architecture of the compiler as a whole. As a quick start, you can begin with the Wikipedia article, and continue with the Dragon Book for more in-depth knowledge.

Requirements

Required Tools

I am using Windows 10 with Windows Subsystem for Linux (WSL). This lets me use a Linux environment without leaving Windows, and use Windows tools while working on the Linux platform. LLVM itself can be compiled on Windows; however, Linux is considered to be more “ubiquitous” in terms of system software development. This is purely a matter of taste, though.

- Linux distro for WSL: I am using Fedora Remix for WSL on my system. I prefer Fedora over Ubuntu because it has a more up-to-date package repository, but that is basically a matter of taste. Unlike Ubuntu, which is officially endorsed by Microsoft, Fedora is not officially endorsed (at the moment), and there is no known WSL package based on the original distro. Fedora Remix for WSL, however, is an unofficial remix of Fedora built to run on WSL. It is provided free of charge on GitHub as an installable Windows app, or you can purchase it from the Windows Store to support the developer.

- Visual Studio 2019: An IDE that makes source code browsing and debugging a lot easier. You can also use 2017, but why use an older version when the newer one is available and the Community edition is totally free and already very powerful? Recent Visual Studio versions support Linux debugging smoothly, and you can integrate WSL with Visual Studio. You can also use another IDE of your liking, such as Eclipse CDT, a JetBrains IDE, or Visual Studio Code with the C++ plugin.

- Linux Packages in the WSL:

- git: Obviously

- clang: Compiler for C-language family

- llvm: Compiler backend. We still need a compiler to compile our compiler source code

- cmake: Build configuration tools

- ninja-build: Build tools that we are going to use, Makefile is also acceptable, though

- python2: LLVM build script still depends on Python2 scripts, so it is necessary to install this

- ccache: Speed up compilation by caching the previously compiled source

- ssh: For connecting the IDE and WSL to perform “remote” debugging

- gdb: The debugger

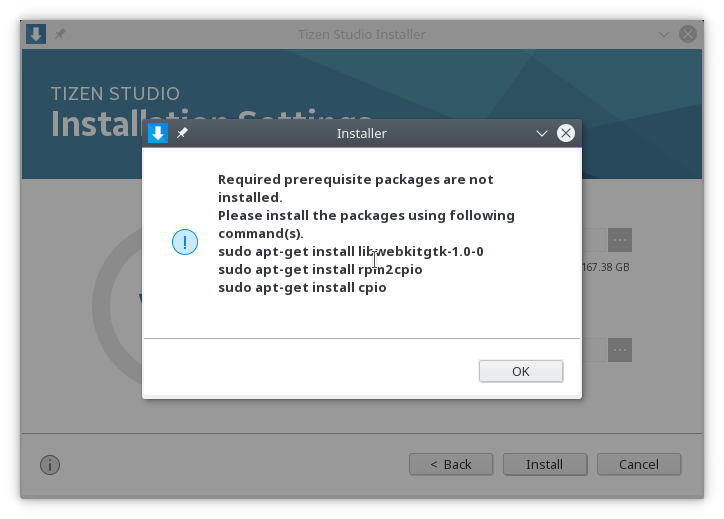

- And perhaps some other more. If there is a library missing, I think CMake script will notify you

- System Requirements:

  - Disk Space: Compiling LLVM, especially in debug mode, generates big binaries that eat your disk space really fast. So, it is a good idea to reserve about 80GB for this compilation alone. Currently, my compiled LLVM folder takes around 64GB.

  - Memory: The build process also requires a lot of memory. 16GB is easily eaten by the build process, especially during linking. Therefore, you should have adequate memory to build LLVM. I think 8GB of memory is not enough.
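The tool list and the disk and memory figures above can be checked before starting the build. The following is a minimal sketch, assuming a Linux or WSL shell. The function names are made up for illustration, and command names may differ from package names on your distro (for example, the ninja-build package may install the command as `ninja` or `ninja-build`).

```shell
#!/bin/sh
# Hypothetical pre-flight helpers; names and thresholds are illustrative only.

# Print every command from the argument list that is not found on PATH.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
    done
    return 0
}

# Succeed if a measured value meets the wanted minimum (both whole numbers).
meets_minimum() {
    [ "$1" -ge "$2" ]
}

# Free disk space of a directory in whole GiB (df -Pk reports KiB).
free_disk_gib() {
    df -Pk "$1" | awk 'NR == 2 { print int($4 / 1024 / 1024) }'
}

# Total RAM in whole GiB, read from /proc/meminfo (Linux and WSL only).
# The value is rounded down, so a 16GB machine usually reports 15.
total_ram_gib() {
    awk '/^MemTotal:/ { print int($2 / 1024 / 1024) }' /proc/meminfo
}
```

For example, `missing_tools git clang cmake ninja ccache ssh gdb` prints the commands that still need to be installed, and `meets_minimum "$(free_disk_gib .)" 80 || echo "less than 80GB free"` compares the current folder against the 80GB suggested above.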

Source Code

You can obtain the source code for LLVM from GitHub:

https://github.com/llvm/llvm-project. The repository contains all LLVM-related projects including Clang.
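Fetching the repository could look like the sketch below. `clone_llvm` is a hypothetical wrapper, and the shallow clone (`--depth 1`) is my own assumption to save download time and disk space; drop it if you want the full history.

```shell
#!/bin/sh
# Repository URL as given in the article.
LLVM_REPO_URL="https://github.com/llvm/llvm-project"

# Clone the repository into the given directory (default: llvm-project).
# --depth 1 fetches only the most recent commit.
clone_llvm() {
    git clone --depth 1 "$LLVM_REPO_URL" "${1:-llvm-project}"
}
```

Calling `clone_llvm` with no argument creates an llvm-project folder in the current directory; the llvm folder used in the build steps is directly inside it.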

Building the LLVM

Build LLVM using Ninja

The LLVM build system is generated by CMake, so you can use any build tool that CMake can generate for. In this article, we are going to use Ninja. Here are some simple steps to compile LLVM using Ninja.

- Create a subfolder for your build inside your llvm folder. I tried to create it elsewhere (the root folder of llvm-project) and it does not work. If you have any insight about this, please let me know. Otherwise, it is a good idea to stick with this folder configuration.

- Enter the subfolder and run cmake with the command below. The command instructs CMake to create a build script for the Ninja build system, and also configures the location of the dependent projects such as Clang and lld:

      cmake -G Ninja ../ \
        -DLLVM_TARGETS_TO_BUILD="X86" \
        -DLLVM_ENABLE_CXX1Y=On \
        -DLLVM_BUILD_TOOLS=On \
        -DCMAKE_BUILD_TYPE=Debug \
        -DLLVM_ENABLE_PROJECTS="clang;lld;clang-tools-extra;libcxx;libcxxabi;compiler-rt" \
        -DLLVM_EXTERNAL_CLANG_SOURCE_DIR=../../clang \
        -DLLVM_EXTERNAL_LLD_SOURCE_DIR=../../lld \
        -DLLVM_EXTERNAL_LIBCXX_SOURCE_DIR=../../libcxx \
        -DLLVM_EXTERNAL_LIBCXXABI_SOURCE_DIR=../../libcxxabi \
        -DLLVM_EXTERNAL_COMPILER_RT_SOURCE_DIR=../../compiler-rt \
        -DLLVM_EXTERNAL_CLANG_TOOLS_EXTRA_SOURCE_DIR=../../clang-tools-extra \
        -DLLVM_CCACHE_BUILD=On \
        -DLIBCXXABI_LIBCXX_PATH=../../libcxx \
        -DLIBCXXABI_LIBCXX_INCLUDES=../../libcxx/include \
        -DCMAKE_C_COMPILER=clang \
        -DCMAKE_CXX_COMPILER=clang++ \
        -DLLVM_USE_LINKER=lld

- When the build script generator is successful, you can start the build by running the ninja command.

- The build runs in parallel, scaled to your system capability. But sometimes the build overdoes it, and at some point you want to reduce it. In my case, it happened during linking, which takes tons of memory, exhausts the available memory, and crashes lld. Use the -j option to limit the number of jobs, especially when the build crashes. Using -l does not necessarily have the same effect.

- When the build is completed, the binaries are stored in the bin folder. You can run the executables directly.

- Anytime you make changes in the source files, you only need to rerun the ninja command from the build folder.
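The advice about -j above can be turned into a small calculation. This is a sketch under stated assumptions, not a measured rule: `choose_jobs` is a hypothetical helper, and the amount of memory one job needs is a guess that you have to tune for your own machine.

```shell
#!/bin/sh
# Hypothetical helper: pick a value for ninja -j.
# Arguments: usable memory in GiB, assumed GiB needed per job, number of CPUs.
choose_jobs() {
    mem_gib=$1
    gib_per_job=$2
    cpus=$3
    jobs=$((mem_gib / gib_per_job))
    # Never start more jobs than there are CPUs.
    if [ "$jobs" -gt "$cpus" ]; then
        jobs=$cpus
    fi
    # Always run at least one job.
    if [ "$jobs" -lt 1 ]; then
        jobs=1
    fi
    echo "$jobs"
}
```

For example, `ninja -j "$(choose_jobs 16 4 "$(nproc)")"` runs at most 4 jobs on a 16GB machine if each job is assumed to need 4GB. Since the memory pressure described above comes from linking, LLVM's CMake option `-DLLVM_PARALLEL_LINK_JOBS=<n>` may be a gentler alternative: with the Ninja generator it caps only the number of concurrent link jobs. On the build folder question raised in the first step, one likely explanation is that `../` has to point at the llvm folder, which holds the top-level CMakeLists.txt; from a build folder directly under the llvm-project root, `../` points at the repository root instead.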

Running the compiled LLVM

The compiled binaries are stored in the bin folder under the build folder. Make sure you run the correct binary by specifying the folder when typing the command. If you are currently in the build folder, use bin/clang, bin/llc, or bin/opt, for example bin/opt -version.
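To avoid picking up a system-wide clang by accident, the path can be spelled out through a small wrapper. This is only a sketch; `built_tool` and `run_built` are hypothetical names, not part of LLVM.

```shell
#!/bin/sh
# Hypothetical helper: path of a tool inside a build folder.
built_tool() {
    printf '%s/bin/%s\n' "$1" "$2"
}

# Run a tool from the build folder, refusing to fall back to PATH.
# Arguments: build folder, tool name, then the tool's own arguments.
run_built() {
    exe=$(built_tool "$1" "$2")
    shift 2
    if [ ! -x "$exe" ]; then
        echo "not built yet: $exe" >&2
        return 1
    fi
    "$exe" "$@"
}
```

From inside the build folder, `run_built . clang --version` runs the freshly built Clang, and it fails with a message instead of silently running another clang if the binary does not exist yet.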

In a future post, I will explain some tips and tricks for debugging LLVM (also applicable to any Linux program) using Windows and Visual Studio.

Hi,

I am new to LLVM and very much interested in exploring it. I have built the sources successfully for debug using -DCMAKE_BUILD_TYPE=Debug with cmake using GNU makefiles. Now when I try to debug the “opt” executable, I see no debug symbols. Also, when I run the touch command on opt.cpp and build opt locally, there is still no debug info. I used make -n to see if -g is passed to g++ while compiling opt.cpp and did not find -g. I am not sure why the debug binaries are not built. Could you please help me out to build and start debugging? Thanks in advance.

Also, when I use gdb ./bin/opt and set a breakpoint at main, it does not show the source file and line number for the function main. This again suggests that the binary is an optimized build, not a debug build.

Hello!

Hmm, that’s interesting. Have you tried using clang as the compiler instead of gcc? In my case, I have no issue passing the build type Debug, and it generates the debug symbols properly.
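One way to narrow this down, as a rough sketch, is to check which build type the build folder actually recorded. `cached_build_type` is a hypothetical helper; it relies only on CMake storing the setting in CMakeCache.txt as a line of the form CMAKE_BUILD_TYPE:STRING=Debug.

```shell
#!/bin/sh
# Print the build type recorded in a build folder's CMakeCache.txt.
# Argument: path to the build folder.
cached_build_type() {
    sed -n 's/^CMAKE_BUILD_TYPE:[A-Z]*=//p' "$1/CMakeCache.txt"
}
```

Running `cached_build_type .` in the build folder should print Debug. If it prints something else, or an empty line, the option did not reach that folder and cmake needs to be run again there. To inspect the binary itself, `readelf -S bin/opt | grep debug_info` lists the DWARF section when debug info is present.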